HPC - In-house Cluster for High-performance Parallel Computing

Guides

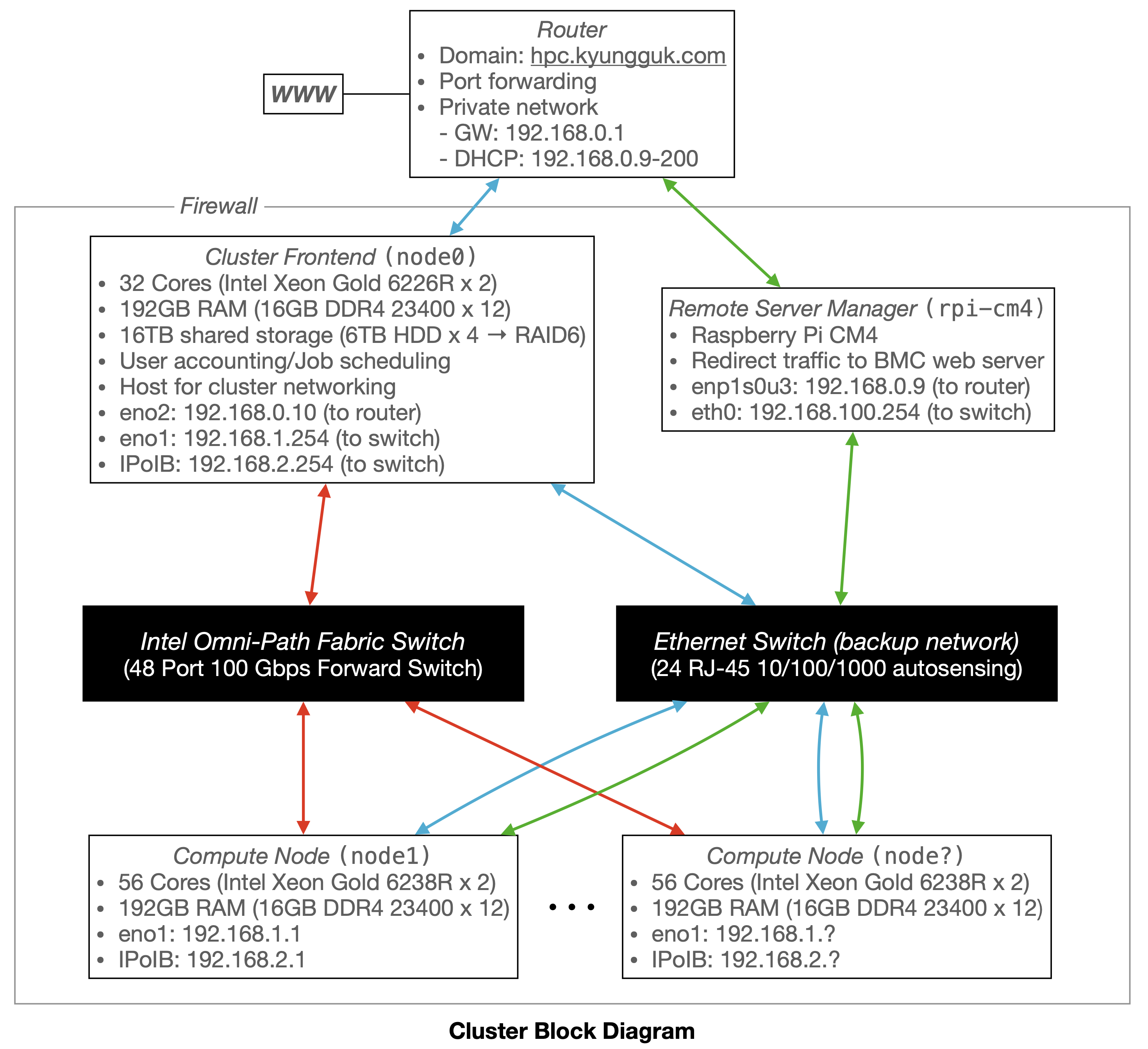

Cluster Configuration

Service Logs

- 2022/04/25 - Node 1 faulty memory replacement

Two faulty memory modules in slots CPU1_DIMM_C1 (uncertain; possibly B1) and CPU2_DIMM_B1 have been replaced. The module in CPU1_DIMM_A1 was also replaced while locating the faulty slot.

- 2022/12/19 - Node 8 faulty memory replacement

System Event Log:

EventID:0074 Time:Wed Nov 30 20:57:31 2022 Controller:SMI Handler SensorType:Memory SensorName:Mem err Sensor Description: Post Package Repair Runtime Request. Rank: 0 CPU: 1 DIMM: E1. - OEM - Asserted

One (possibly more than one) faulty memory module was replaced.

- 2023/05/10 - Node 6 memory ECC error (E1)

System Event Log:

EventID:0587 Time:Sat Apr 29 18:17:27 2023 Controller:SMI Handler SensorType:Memory SensorName:Mmry ECC Sensor Description: CPU: 1, DIMM: E1 DIMM Rank: 1. - Correctable ECC / other correctable memory error - Asserted

EventID:0588 Time:Sun Apr 30 17:50:19 2023 Controller:SMI Handler SensorType:Memory SensorName:Mmry ECC Sensor Description: CPU: 1, DIMM: E1 DIMM Rank: 0. - Correctable ECC / other correctable memory error - Asserted

One faulty memory module in CPU1_DIMM_E1 has been replaced.
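When several memory events pile up, it can help to group them by slot before deciding which module to pull. The following is a minimal Python sketch, assuming the System Event Log text has the field layout seen in the excerpts in this log (`EventID:`, `Time:`, `Controller:`, `SensorType:`, `SensorName:`, `Sensor Description:`). The function names `parse_sel` and `count_by_slot` are hypothetical helpers, not part of any BMC tool, and other firmware versions may format entries differently.

```python
import re
from collections import Counter
from datetime import datetime

# Field layout inferred from the log excerpts in this page (an assumption).
EVENT_RE = re.compile(
    r"EventID:(?P<event_id>\d+)\s+"
    r"Time:(?P<time>.+?)\s+"
    r"Controller:(?P<controller>.+?)\s+"
    r"SensorType:(?P<sensor_type>.+?)\s+"
    r"SensorName:(?P<sensor_name>.+?)\s+"
    r"Sensor Description:\s*(?P<description>.+?)\s*"
    r"(?=EventID:|$)",  # an entry ends where the next one starts, or at end of text
    re.DOTALL,
)
CPU_RE = re.compile(r"CPU:\s*(\d+)")
DIMM_RE = re.compile(r"DIMM:\s*([A-Z]\d+)")


def parse_sel(text):
    """Split raw SEL text into a list of dicts, one per event."""
    events = []
    for match in EVENT_RE.finditer(text):
        event = match.groupdict()
        # Timestamps look like "Sat Apr 29 18:17:27 2023" (English day/month names).
        event["time"] = datetime.strptime(event["time"], "%a %b %d %H:%M:%S %Y")
        cpu = CPU_RE.search(event["description"])
        dimm = DIMM_RE.search(event["description"])
        # Build a slot label in the CPU<n>_DIMM_<slot> style used in this log.
        event["slot"] = (
            f"CPU{cpu.group(1)}_DIMM_{dimm.group(1)}" if cpu and dimm else None
        )
        events.append(event)
    return events


def count_by_slot(events):
    """Count events per memory slot, skipping entries without a slot."""
    return Counter(e["slot"] for e in events if e["slot"] is not None)
```

Passing the exported log text to `parse_sel` and then to `count_by_slot` gives a per-slot tally; a slot that keeps reporting correctable ECC errors is a reasonable candidate for replacement.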

- 2023/05/10 - Node 9 Omni-Path PCIe speed issue

The Omni-Path NIC was found to be using only one PCIe lane (x1) instead of 16 (x16), which reduced MPI communication speed. The solution was to move the NIC to another riser slot. It is unclear why the first riser slot only supports an x1 link.
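A degraded link of this kind can be spotted without opening the chassis. The sketch below, in Python, assumes a Linux node where the kernel exposes the `current_link_width` and `max_link_width` attributes under `/sys/bus/pci/devices/<address>/`. The helper names and the device address in the usage note are placeholders for illustration; the actual address of the Omni-Path adapter on each node has to be looked up (for example with `lspci`).

```python
from pathlib import Path


def read_link_width(device_dir):
    """Return (current, maximum) PCIe link width for one device directory.

    device_dir is a path such as /sys/bus/pci/devices/<address>.
    """
    dev = Path(device_dir)
    current = int((dev / "current_link_width").read_text().strip())
    maximum = int((dev / "max_link_width").read_text().strip())
    return current, maximum


def link_is_degraded(device_dir):
    """True if the device negotiated fewer lanes than it is capable of."""
    current, maximum = read_link_width(device_dir)
    return current < maximum
```

For example, `link_is_degraded("/sys/bus/pci/devices/0000:3b:00.0")` (placeholder address) would be expected to return `True` for a x16-capable card that trained at x1, as happened on Node 9. Running the check on every node after hardware work is a cheap way to catch a card seated in a slot with fewer usable lanes.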